This is the second part of the article on requirements testing, based on an online course called “Testing without requirements”. As you may remember, Part 1 was dedicated to the composition, functionality, and external interfaces of a product; Part 2 covers its qualities (characteristics, properties).

System qualities

In order to have a full, exhaustive description of a system, make sure that the product requirements document covers the following topics:

- installation requirements,

- system availability,

- system reliability,

- data integrity,

- system security,

- system safety,

- performance,

- interoperability,

- usability.

Let’s take a look at every single one of these points and create a set of checklists that could be used to quickly identify whether all of the qualities of a system have been described, and described in full at that.

I should also mention that different qualities of a product often correspond to different areas of testing and are therefore tested by different QA specialists. For example, you may not be in charge of checking the system’s performance; so, if some data is missing for that particular part, or if it’s not something you’re specifically trained in, you don’t necessarily have to dive deep into that topic. Conversely, if you specialise in that domain, the checklists you prepare on a daily basis are probably much, much longer. So, a friendly reminder: my aim with this article is to show you what good information coverage looks like in general, without going into too much detail.

1. Installation requirements

They determine how easy it is to install your software; of course, this type of requirement is only relevant if your product is supposed to be installed.

Example: A user without any specific skills and/or training must be able to install the application in 15 minutes or less. Here, you have both a requirement concerning the user’s level of knowledge and a result that can be measured in minutes.

A checklist of things that must be described:

- initial installation process as well as software removal/uninstall process,

- recovery process following a partial or interrupted installation,

- process of reinstalling the same version, installing a new one, or reverting to a previous one,

- possibility to install/reinstall/uninstall additional components and updates, along with corresponding processes,

- app’s behaviour after successful/failed installation,

- rights, permissions and privileges granted to the installer app,

- execution time of different operations and an acceptable number of errors that may occur during the process,

- applications that should be installed or closed before starting the installation process.

2. System availability

This is the period of time (usually scheduled) during which a system is actually available for use and is fully operational. You should keep in mind that some parts of the system can be particularly important not only to users, but to your company as well – this is why, if something happens to those parts, or if they break for whatever reason, it is bound to cause major problems.

Example: The booking module must be available at least 99% of the time, every weekday between 7:00 AM and 12:00 PM UTC.

Ideally, the description of such parts (or modules, as in the example) must always include:

- system availability requirements (recovery time after a crash or a failure, maintenance schedule),

- system maintenance / downtime notification process, as well as approved wordings for respective notification messages.
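
To see what an availability requirement like the one in the example means in practice, it can help to turn the percentage into a downtime budget. The snippet below is only a minimal sketch: the function name `allowed_downtime` is made up for illustration, and it assumes that “12:00 PM” in the example means noon, so the scheduled window is 5 hours on each of the 5 weekdays.

```python
from datetime import timedelta


def allowed_downtime(window_per_day: timedelta, days_per_week: int,
                     availability: float) -> timedelta:
    """Return the weekly downtime that still satisfies the availability target.

    `availability` is a fraction between 0 and 1 (0.99 means 99%).
    """
    if not 0 <= availability <= 1:
        raise ValueError("availability must be between 0 and 1")
    scheduled = window_per_day * days_per_week
    return scheduled * (1 - availability)


# Assumption: 7:00 AM to 12:00 PM is read as 07:00-12:00, i.e. 5 hours a day.
budget = allowed_downtime(timedelta(hours=5), days_per_week=5, availability=0.99)
print(budget)
```

Under these assumptions the budget is about 15 minutes per week (1% of 25 scheduled hours). If the requirement does not say whether the 99% is measured per day, per week, or per month, that is a gap worth raising.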

3. System reliability

This is the probability that your system will work without any issues, and stay fully operational, within a specified time frame. This quality is quite similar to availability; however, availability mostly refers to stable operation and recovery, whereas with reliability the focus shifts to malfunctions and unplanned unavailability caused by emergencies.

Example: The system must not be unavailable for more than 60 seconds per 24 hours of operation.

Therefore, you should check that there exists a definition of:

- system reliability criteria,

- failure recovery requirements,

- critical failure criteria,

- parts of the system that are most likely to be affected by failures.
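
A reliability criterion like the one in the example is easiest to test when it can be checked against an incident log. Below is a rough sketch of such a check. The function name, the log format (a list of start/end pairs), and the choice of a fixed 24-hour window are all my assumptions, since the example does not say whether the 24 hours are a calendar day or a rolling period.

```python
from datetime import datetime, timedelta

DOWNTIME_BUDGET = timedelta(seconds=60)  # taken from the example requirement
WINDOW = timedelta(hours=24)


def downtime_in_window(outages, window_start):
    """Sum the parts of each (start, end) outage that fall inside the window.

    Assumes the outages in the log do not overlap each other.
    """
    window_end = window_start + WINDOW
    total = timedelta()
    for start, end in outages:
        overlap = min(end, window_end) - max(start, window_start)
        if overlap > timedelta():
            total += overlap
    return total


# Hypothetical incident log: two short outages within the same 24 hours.
day = datetime(2024, 3, 4)
log = [
    (day + timedelta(hours=1), day + timedelta(hours=1, seconds=40)),
    (day + timedelta(hours=5), day + timedelta(hours=5, seconds=30)),
]
total = downtime_in_window(log, day)
print(total, "within budget" if total <= DOWNTIME_BUDGET else "over budget")
```

Neither outage in this made-up log exceeds 60 seconds on its own, yet together they do, which is exactly the kind of case the reliability criteria should spell out.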

4. Data integrity

This is the assurance and maintenance of data accuracy and consistency, as well as the prevention of loss of the information that has been entered into the system. Basically, it’s how well your system protects the data from discrepancies and damage caused by, let’s say, an emergency shutdown, an input error, or a retrieval of files from an archive.

Example: The product must have a daily check-up feature that scans the system for unauthorised deletion and/or modification of data.

A checklist of things that must be described:

- data editing process,

- requirements regarding physical security of computers and external storage devices,

- data archiving requirements (which data should be archived, when, for how long, what are the requirements for its deletion),

- requirements regarding the archiving process itself (how frequently it should be performed, automatically and/or upon request, which files and databases are concerned, where data should be moved to, should data be compressed and checked before it’s archived or not, etc.),

- requirements for recovering data from an archive.

5. System security

Or, in other words, a set of measures implemented to prevent, and block, unauthorised access to information with intent to view, edit, and/or delete it. This includes not only the requirements for physical security of your servers, for example, but also the management of user rights and permissions.

Example: If a user enters an incorrect password 3 times in a row within a 10-minute time frame, their account gets blocked for 24 hours, and the system’s operator receives a notification about potentially suspicious login attempts.

For this section, make sure that there exists a definition of:

- access levels for different types of data and functional modules of the system,

- system protection (anti-virus) requirements,

- user environment protection requirements,

- system response to unauthorised attempts to access the system.
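
Writing a rule like the lockout example as code is a quick way to find the questions the requirement leaves open. The following is a minimal sketch, not a production-ready mechanism: the class `LoginGuard`, its method names, and the returned status strings are hypothetical, and it assumes that a successful login resets the counter and that attempts exactly 10 minutes apart still fall within the time frame.

```python
from collections import deque
from datetime import datetime, timedelta

MAX_FAILURES = 3
FAILURE_WINDOW = timedelta(minutes=10)
LOCK_DURATION = timedelta(hours=24)


class LoginGuard:
    """Tracks failed logins for one account (illustrative only)."""

    def __init__(self):
        self.failures = deque()   # timestamps of recent consecutive failures
        self.locked_until = None
        self.notifications = []   # stand-in for messages sent to the operator

    def is_locked(self, now):
        return self.locked_until is not None and now < self.locked_until

    def record_attempt(self, now, success):
        if self.is_locked(now):
            return "locked"
        if success:
            self.failures.clear()  # assumption: success breaks the "in a row" streak
            return "ok"
        self.failures.append(now)
        # Forget failures that are older than the 10-minute window.
        while now - self.failures[0] > FAILURE_WINDOW:
            self.failures.popleft()
        if len(self.failures) >= MAX_FAILURES:
            self.locked_until = now + LOCK_DURATION
            self.failures.clear()
            self.notifications.append(f"Suspicious login attempts at {now.isoformat()}")
            return "locked"
        return "failed"


guard = LoginGuard()
start = datetime(2024, 3, 4, 9, 0)
for minutes in (0, 4, 8):
    status = guard.record_attempt(start + timedelta(minutes=minutes), success=False)
print(status, len(guard.notifications))
```

Each assumption in the sketch (what resets the streak, how the boundary of the time frame is treated, what happens to attempts made while the account is blocked) is a detail that the requirements should ideally state explicitly.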

6. System safety

Not to be confused with system security mentioned above: if anything, system safety is actually system security in reverse. It refers to protection measures that prevent a system from causing harm to people and/or damaging their possessions. When you’re analysing the product’s safety levels, make sure to check that it is protected from unwanted, prohibited, and dangerous data entering it from the outside, and that there is a description of the system’s response to such cases of violation.

Example: The application must detect and immediately block all media that features prohibited content, such as posts referring to illegal activity or videos with disturbing imagery. Or, if we’re talking about some sort of machinery or a robot, safety checks can include verifying that its users don’t run the risk of getting an electric shock or any other kind of injury, and that it’s just as safe for the environment as it is for people.

A checklist of things that must be described:

- ways of using a product that can be potentially dangerous, along with detection and response measures,

- maximum acceptable failure rate that could cause damage,

- types of failures that could be harmful to people and/or their possessions,

- actions taken by the operator that could unintentionally cause harm to people and/or damage their possessions,

- modes of operation that could present a risk of harm to people and/or their possessions.

7. Performance

This is the speed of a system’s response to user requests and actions.

Example: Considering that the average internet speed is 30 Mbps, the page load speed must be less than, or equal to, 1.5 seconds.

In fact, the term “performance” encompasses much more than just request processing speed; this is why you should check whether the following points have all been defined in the documentation:

- the time it takes for your system to react to different operations performed by a user,

- system’s bandwidth / its information-carrying capacity,

- data capacity,

- system’s behaviour in reduced functionality mode, as well as under high load / overload conditions.

8. Interoperability

Or, the capacity of your product to exchange data with other systems and/or integrate with external components.

Example: The system must support integrations with PayPal and Stripe so that users can pay for their orders online.

Actually, if you followed along with the steps from the first part of this article (and now have either a Component-based model or an External services interaction model of your product), then you can pretty much consider this check-up done. However, if there’s a need for a more low-level kind of analysis, ensure that there’s a proper description of:

- all external systems that your product must interact with,

- all data and services that will be exchanged between the systems,

- all data types and file formats that will be used in these exchanges,

- all hardware components that your product must interact with,

- all codes and messages that your product must receive from other systems and/or devices and then interpret/process,

- standard connection protocols required for establishing the interoperation of multiple systems,

- obligatory interoperability requirements that were imposed by external systems and must be met by your product.

9. Usability

This includes all qualities of your software that make it possible for any given user to run it in order to achieve their particular objectives in a specified environment, with a sufficient level of efficiency, performance, and satisfaction.

Example: A user without any specific skills and/or training must be able to book and pay for their ticket online in 7 minutes or less.

It’s important to note that, normally, usability can’t be fully reflected in the requirements; it’s rather a matter of concrete design decisions that the team makes along the way. However, it is still worth checking that the following points are described in the documentation:

- usability criteria (quantitative data),

- different techniques that can help users to speed up their work with(in) the system,

- user training requirements,

- requirements concerning the prevention of operational errors,

- requirements concerning text messages/notifications for users,

- UI guidelines (or links to them).

Prioritisation

You have probably noticed that, when it comes to documenting various qualities of a product, the task oftentimes gets neglected – or is left up to the team’s architect / system admin / designer / some other specialist.

However, if neither product information nor a proper specialist is in sight, you will most likely be the one who has to gather all the necessary inputs. To do that, it’s best to:

- Clarify whether you actually need to test the requirements that concern the product’s qualities;

- If the answer is “It would’ve been nice…” – define what level of detail is expected from the descriptions that you have to provide;

- Prioritise the qualities based on their importance – make sure to do this before starting the main task, so that you can avoid wasting time on looking for and recovering the data that is not necessary for this particular project.

Of course, you can check what the current priorities are with your team lead, project manager, or the client’s contact person – but, if these options are not available to you for one reason or another, you can try your hand at prioritising everything on your own.

One of the many prioritisation techniques is called Pairwise comparison, or Paired comparison analysis. Let’s take a quick look at how it can be put into practice.

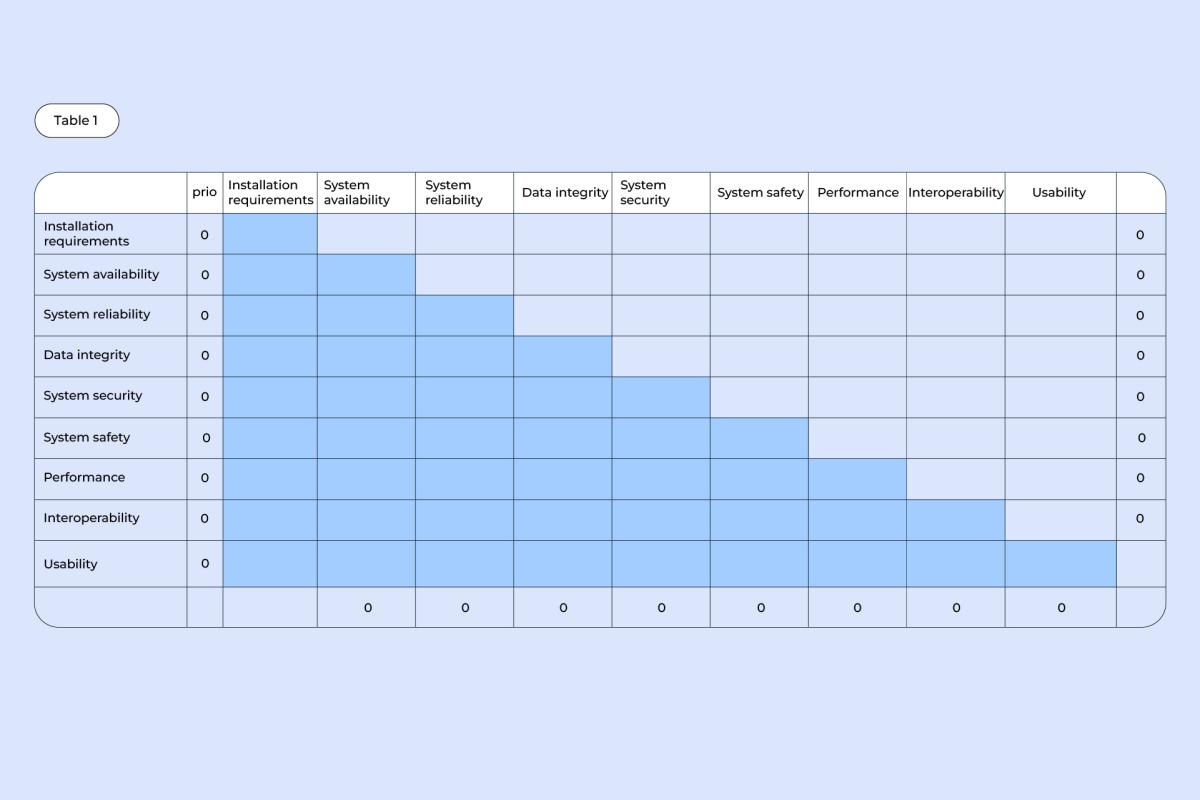

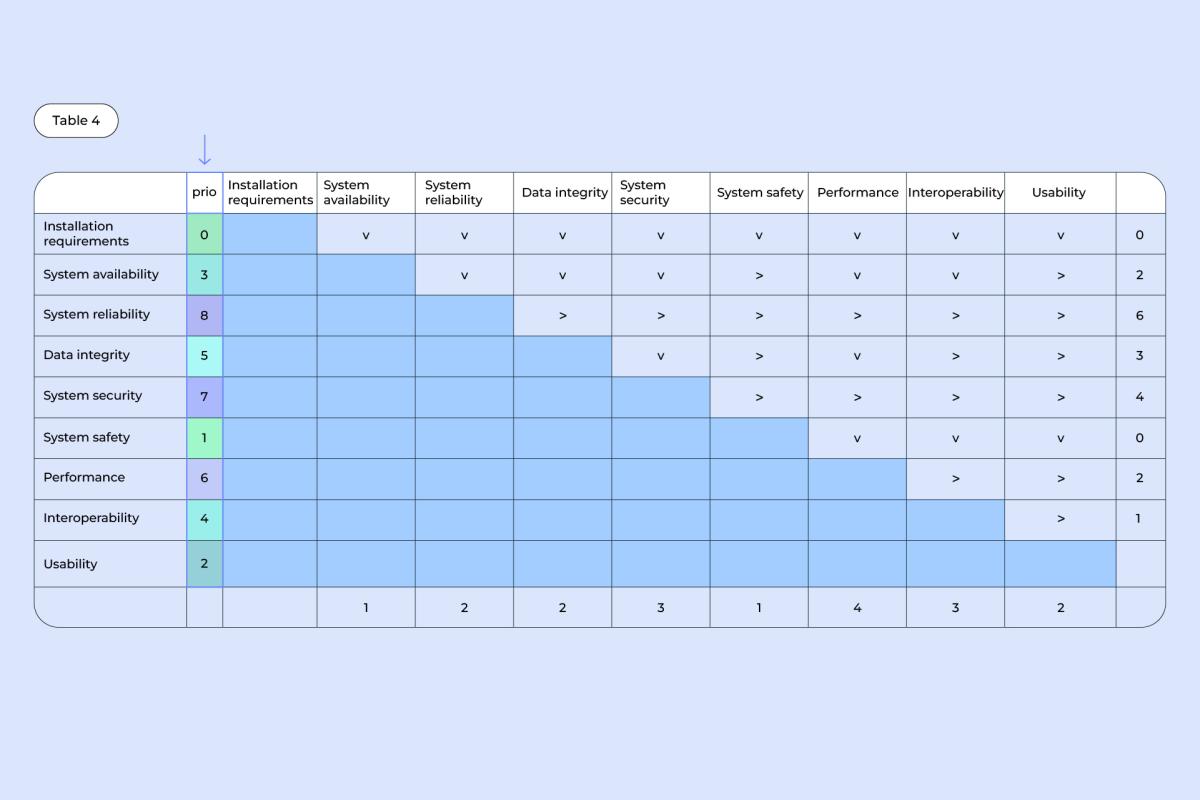

1. Write down all of the qualities – first in a column, then in a row, creating a table like the one shown below:

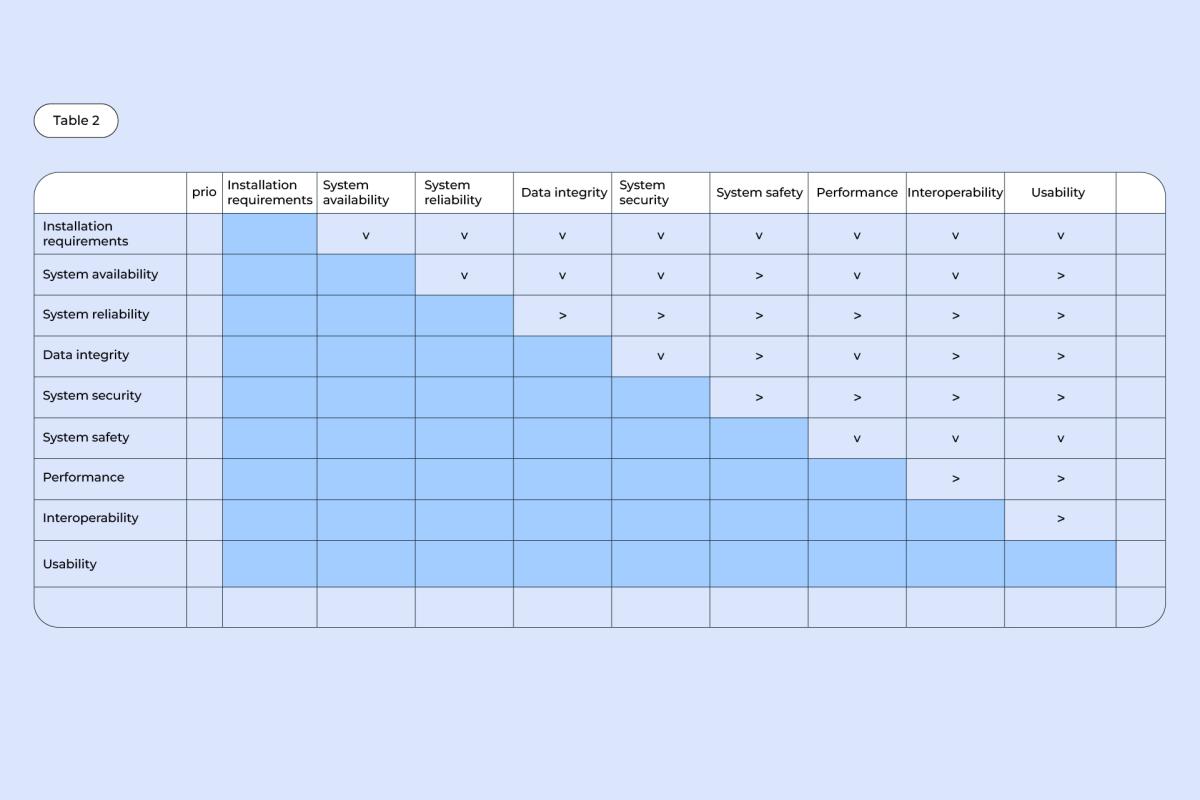

2. Compare each quality from the column with every quality from the row, and note which quality of the pair is more important: if it’s the one from the column, draw an arrow pointing downwards (v); if it’s the one from the row, draw an arrow pointing rightwards (>).

Example: System availability is more important than installation requirements (v), system reliability is more important than interoperability (>).

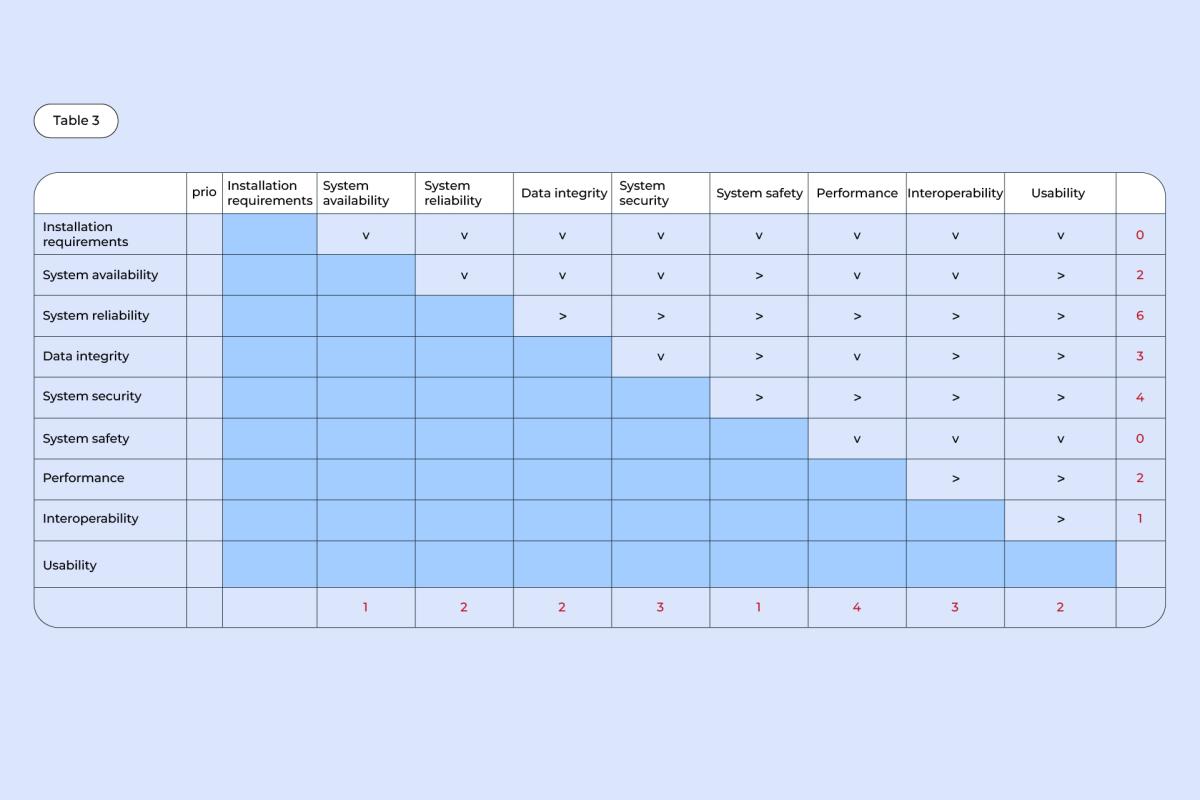

3. In the rightmost column, put down a number corresponding to the number of (>) arrows in each row. Then, do the same with the bottom row – count how many (v) arrows there are in each column and put down a corresponding number underneath.

4. For every quality, add up the numbers from its column and its row, and put the total into the “Prio” column.

Example: For data integrity, there are 2 (v) arrows in a column and 3 (>) arrows in a row, making it a 5 in total.

Once all the adding up is done, you end up with a clear list of priorities. Since each quality is compared with the other eight, its score ranges from 0 for the lowest priority to 8 for the highest.
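
If you’d rather not draw the table by hand, the four steps above boil down to counting how many comparisons each quality wins. Here is a small sketch of that idea. The function `pairwise_priorities` and the `more_important` callback are names I made up, and the example ranking is purely hypothetical: it only stands in for the judgement calls you would make yourself.

```python
from itertools import combinations

QUALITIES = [
    "installation requirements", "system availability", "system reliability",
    "data integrity", "system security", "system safety",
    "performance", "interoperability", "usability",
]


def pairwise_priorities(qualities, more_important):
    """Compare every pair once and count the wins of each quality."""
    scores = {quality: 0 for quality in qualities}
    for first, second in combinations(qualities, 2):
        winner = more_important(first, second)
        if winner not in (first, second):
            raise ValueError(f"{winner!r} is not one of the compared pair")
        scores[winner] += 1
    return scores


# Hypothetical judgement, for illustration only: whichever quality appears
# earlier in this list is treated as the more important one of a pair.
EXAMPLE_RANKING = [
    "system security", "data integrity", "system availability",
    "system reliability", "performance", "usability",
    "interoperability", "system safety", "installation requirements",
]


def example_judgement(first, second):
    return min(first, second, key=EXAMPLE_RANKING.index)


scores = pairwise_priorities(QUALITIES, example_judgement)
for quality, score in sorted(scores.items(), key=lambda item: -item[1]):
    print(f"{score}  {quality}")
```

Each pair is compared exactly once, just like one cell of the table, so nine qualities give 36 comparisons in total. Real judgements are rarely as consistent as the made-up ranking here, so two qualities may well end up with the same score; in that case, compare those two directly to break the tie.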

And with that, my talk about the assessment of product requirements is done!

Let’s sum up all of the things you’ve learned how to do:

- verify whether all of the necessary information about the product is documented,

- identify which data is missing,

- define the importance, necessity, and priority of particular types of requirements.